This post sets Cookies due to the Tableau embeds.

This is a long post! Coming up:

- Why we do what we do

- A bit about how we do development

- Changes we’ve made related to performance to deliver Version 4, and

- How we’ve measured the results of that

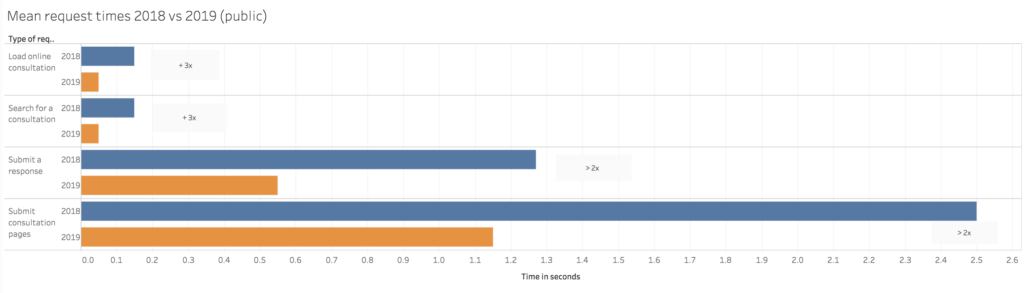

tl;dr Citizen Space is now twice as fast and can cope with twice as many things at once.

Intro

Lots of democratic decision-making bodies around the world use Citizen Space to connect with citizens, most often in the form of consultations (but not just those). They also use it to improve the quality of work around consultation and engagement inside their organisation. This means it handles a lot of information:

In the past year, approximately 1,080,000 responses were submitted to exercises run via Citizen Space, and currently around 15,000 people working in public service around the world are registered as administrators on Citizen Space sites across 124 different organisations.

It’s our main job to make sure that:

- The information stored within customer sites is kept safe

- Respondents are not prevented from submitting their views

- The work of all those public servants is made easier rather than harder

Performance underpins all of this and has been the focus of the last few months.

How we do development

Our product development focuses on incremental improvements released several times a year, mostly visible product features and updates, which are largely suggested by our users.

There’s a lot of other stuff which competes for our time. It’s a balancing act: keeping the products moving forward while meeting regularly changing security obligations, complying with (and proving compliance with) changes to data protection and other global legislation, answering support questions from users, handling internal governance, and so on. There are only about twenty of us across the UK, Australia and New Zealand, so we have to prioritise our work very carefully to keep on top of these competing demands.

A little on performance

Consistent performance is hard. Our customers are in control of how much content goes into their platform, and we don’t apply artificial limits to the number of consultations, users, responses or documents they add to their sites. This results in varying requirements on our production infrastructure [1].

Administrative tasks in particular often consume a disproportionate amount of capacity as they can involve searching, annotating and exporting thousands of consultation responses and their supporting documents. If you have lots of people doing those things at once — and it’s the nature of this kind of tool that you do — that’s a big load for a server. Combining that with multiple active consultations and a constant stream of people visiting and submitting their views – a moving target which can peak at hundreds, and occasionally thousands, of requests a second – results in a constantly shifting workload where even small performance gains can have a big effect on overall throughput and time spent waiting.

What did we want for version 4?

Our aim was to improve the performance of Citizen Space, increasing its capacity to perform well both for day-to-day needs and at peak times. We wanted to:

- Increase the number of people able to do things at once: Concurrency = more citizens able to have their say on decisions which affect them + more admins across an organisation able to work simultaneously to get their jobs done

- Decrease the time it takes anyone to do something on Citizen Space: Speed = faster loading times, giving more time to spend on other things, saving time for the public purse, freeing up administrators, saving time for citizens who want to get on with making dinner/having a bath/going out/living life

- Improve sites’ ability to perform even under unprecedented heavy load: Availability = high-profile (often contentious) consultations do not suffer the double whammy of lots of people passionately keen to give their views and then struggling to do so on a site flapping under the pressure of sustained load

Version 4 delivery

Alongside our standard ongoing development work [2], we’d been working on preparing a large infrastructure update to Citizen Space for most of 2018 and this formed the backbone of the performance updates. Toward the end of 2018 we turned our technical attention to take a specific run at performance in our regular milestones, too, with the particular focus on delivering those concurrency, speed and availability improvements.

The updates were delivered over a series of smaller milestones released with no downtime for sites, plus one large overnight update in January 2019 with a few minutes of downtime, which comprised the main infrastructure release.

How do we know what difference these updates made?

We looked at request [3] data on all Citizen Space sites across 2018 to tell us how many times each request was made and the length of time it took for those requests to be delivered. We broke this data down by:

- Public side and admin side

- Type of request

- Total number of requests (of each type)

- Time taken to serve requests

- The type of demand a site is set up to cope with: small-medium, medium-high or high-very high demand

We did the same with the number of requests, and the time it has taken to deliver them, since we ran the upgrades. We then forecast this out using the 2018 request numbers to find the expected time savings for 2019 [4]. To make comparisons, we calculated the mean time to serve each request for both 2018 and 2019 [5].
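As a rough illustration of the forecast described above, here is a minimal Python sketch. The request types, counts and mean times are made-up sample values, and the names `RequestStats` and `forecast_saving_hours` are hypothetical. It is not the actual analysis behind the charts, just the same idea: replay the 2018 request counts at the 2019 mean speeds and add up the difference.

```python
from dataclasses import dataclass


@dataclass
class RequestStats:
    """Per-request-type figures. All values used below are illustrative."""
    request_type: str
    count_2018: int          # how many times this request was made in 2018
    mean_secs_2018: float    # mean time to serve it in 2018
    mean_secs_2019: float    # mean time to serve it since the upgrades


def forecast_saving_hours(stats: list[RequestStats]) -> float:
    """Replay the 2018 request counts at 2019 speeds; return hours saved."""
    saved_secs = sum(
        s.count_2018 * (s.mean_secs_2018 - s.mean_secs_2019) for s in stats
    )
    return saved_secs / 3600


# Hypothetical sample data, not real Citizen Space figures.
sample = [
    RequestStats("view dashboard", 100_000, 1.8, 0.9),
    RequestStats("export responses", 2_000, 36.0, 18.0),
]

print(f"{forecast_saving_hours(sample):.1f} hours saved")
```

With these sample values the sketch reports 35.0 hours saved. Because the counts are held at 2018 levels, any growth in usage would make the real saving larger.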

The results for the public side of sites are similar across all of them, so there is only one chart for those. This is because, in order to prevent those chunkier admin requests from impacting on people responding, Citizen Space is technically structured so that it broadly separates out the public from the admin side.

It’s worth stating that we would expect some admin-side requests to take a number of seconds to serve, because they require large multi-megabyte files to be exported. For public-side transactions, though, we’re ultimately aiming for under two seconds on all types of request, which would make Citizen Space faster than about 70% of the world wide web. Either way, we still want both the chunky requests and the already-pretty-fast ones to improve and continue improving.

The results

Citizen Space is now twice as fast

What does this mean?

1. Citizen Space can cope with at least twice as many requests at the same time (concurrency)

2. Compared with 2018, it now takes about half (and often less than half) of the time to deliver on most requests (speed)

In short: it can do more at once, and it can do it quicker.

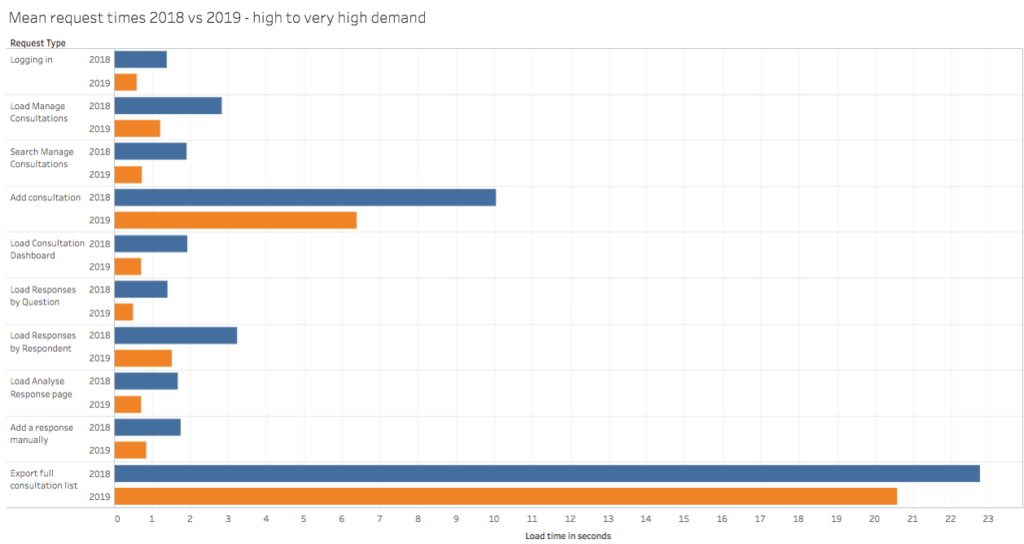

The following charts show the average time in seconds each request took to deliver, so the shorter the bar, the less time it has taken. If you hover over the bars it’ll tell you the mean response time for each type of request in 2018 (blue – first bar) and 2019 (orange – second bar). Sadly – as these are charts – they’re not great if you’re reading this on a smartphone, but I’ve put the links below each one to view them individually, or you can view the whole set together on Tableau Public (link opens in new tab).

Public side of Citizen Space

Citizen Space can now serve over twice as many requests per second, i.e. it can deliver pages to double the number of respondents clicking on things at once.

See the chart on Tableau Public (link opens in new tab)

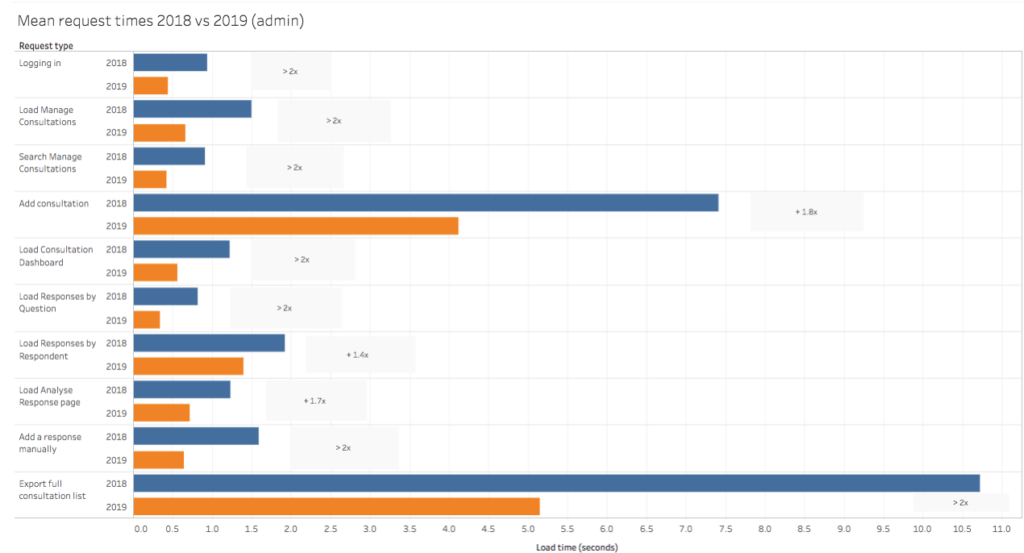

Admin side (across all sites)

See the chart in Tableau Public (link opens in new tab)

If we use the total number of requests made over the course of 2018 across all sites (approximately 2 million requests) and forecast out how long those same requests would now take in 2019 after the most recent upgrades, it’s a saving of approximately 420 hours (or approximately 56 full working days) in total across all organisations using Citizen Space, and that’s a lot of time which can be spent elsewhere.

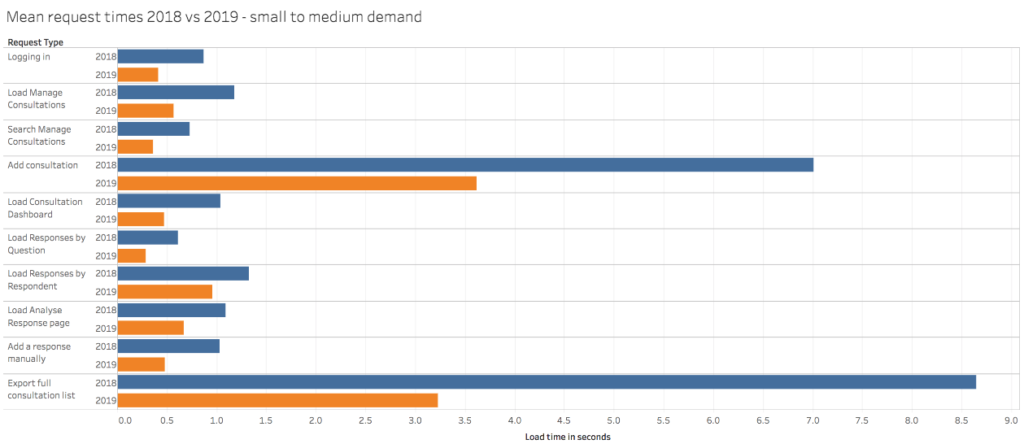

Broken down by demand

Small to medium demand sites:

See the chart in Tableau Public (link opens in new tab)

This group comprises the largest number of our customers. If we use the total number of requests made over the course of 2018 for this group (over 870,000 requests) and forecast out how long that same number of requests would now take in 2019 after the most recent upgrades, it’s a saving of almost 123 hours (or over 15 full working days).

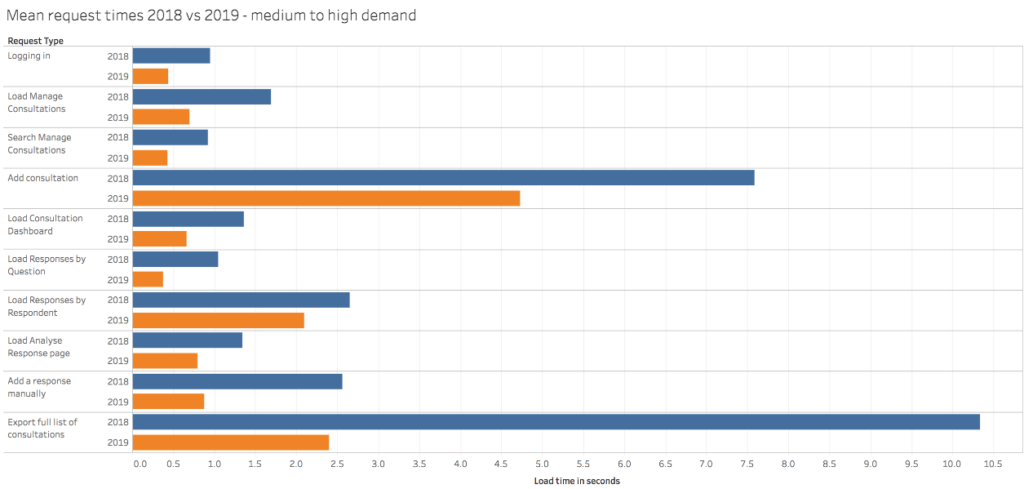

Medium to high demand sites:

See the chart in Tableau Public (link opens in new tab)

We have just under fifty organisations in this group. If we use the total number of requests made over the course of 2018 for this group (over 709,000 requests) and forecast out how long that same number of requests would now take in 2019 after the most recent upgrades, it’s a saving of almost 150 hours (or just under 19 full working days) for these organisations collectively.

High to very high demand sites:

See the chart in Tableau Public (link opens in new tab)

This is the smallest group of organisations (currently ten), but they are highly active. If we use the total number of requests made over the course of 2018 for this group (just under 470,000 requests) and forecast out how long that same number of requests would now take in 2019 after the most recent upgrades, it’s a saving of almost 150 hours (or just under 19 full working days) for these organisations collectively.

I’ve included more explanation of the time to deliver some requests in the footnotes below. [6]

In terms of the Availability goal, with Citizen Space able to do more, and do it quicker, we expect to see fewer instances of sites struggling under very heavy load. Luckily, this isn’t a very regular occurrence, though we do track it via our site monitoring and tagged support tickets, so we’ll be looking at that data in the next few months to see if this too has seen an improvement.

What next?

We have a further release going out this week which will deliver improvements to the export of all consultations, so we should see the request times for that go down, too. This release includes other changes which we hope will add to the performance of Citizen Space, so we’ll take another look at these stats in a few months to see how we’re getting on.

As mentioned earlier, availability is a harder one to measure as it requires sites to have alerted our monitoring that there have been errors or issues, so we need more data to see what difference has been made as – thankfully – we don’t have regular instances of this. We have taken a look at the small amount of monitoring data since the upgrades and the indication is that this too has seen an improvement, but the numbers are so small that we’d rather have fuller information over a number of months to compare with 2018 to be sure.

Coming up, we’re working on meeting the new WCAG 2.1 standards. Citizen Space is currently designed to meet W3C WAI WCAG 1.0 & 2.0 Level AA.

There is much to do. Let’s crack on, shall we?

[1] We run a subscription model matching type of organisation and expected capacity requirements with computing provision, so that customers can have unlimited use up to the capacity of their machine.

As an example, local regional councils with lower staff numbers and a smaller likely audience for their consultations do not require the same capacity as, say, a central government department, which runs national consultations and needs more administrators across a much larger organisation. We believe this approach saves organisations from needing to limit their ability to consult, or to take a risk with sharing log-ins as might happen with a model which charges per exercise or per user. We don’t want to restrict organisations from seeking the views of citizens, nor to encourage practices which might lower security. We prefer joined-up, improved processes across an organisation where people can work together effectively. The ‘limited only by hardware’ model helps to deliver that, as the organisation is free to structure their work as they need without being concerned about an increased cost for doing so. Most organisations never need to increase their capacity (and therefore subscription level). If they do, we’ll purchase and provide more capacity, or they can choose to manage their content accordingly. It’s a bit like when you run out of space on your smartphone: you can either increase your memory size or, if you don’t want to do that, delete some photos.

As taxpayers and citizens, we care about the public sector being ripped off by suppliers, so we work in as lean a way as we possibly can and charge just enough for each subscription band to: be able to reinvest in continually updating the software, to meet any statutory and market obligations, to pay our taxes and fair wages for hard-working people, and to keep the lights on in our offices.

[2] In 2018 our other commitments as a company tended to relate to updates and additional measures around information security, and GDPR.

[3] A request means a request to the server, such as selecting to continue on a page, clicking a button, loading a page, requesting an export — things like that.

[4] We have more people using Citizen Space now, so the number of times these pages will be requested in 2019 is likely to be higher than 2018, and therefore the speed improvements will likely be cumulatively even more significant.

[5] There was a lot of data, so it’s worth noting that when calculating the mean there were some outliers: sites with large databases which took longer to serve certain requests (like the export of all consultations), and newer customers with very small databases which were quicker. To account for that, in the charts in ‘The results’ section, we’ve included the mean across all customers and also set out charts for the average response times of the three groups of different site demands, from smaller organisations on sites set up for small-medium sized demand, to the largest with sites set up to cope with high-very high demand.
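To sketch why the grouped view matters, the snippet below compares a single overall mean with per-group means. The group labels follow the three demand groups described in this post, the timings are invented for illustration, and `mean_by_group` is a hypothetical helper rather than anything from our actual analysis.

```python
from collections import defaultdict
from statistics import mean

# (demand group, seconds to serve one export request); invented values.
timings = [
    ("small-medium", 2.0),
    ("small-medium", 4.0),
    ("medium-high", 10.0),
    ("high-very high", 40.0),
]


def mean_by_group(rows):
    """Return the mean serve time for each demand group."""
    grouped = defaultdict(list)
    for group, secs in rows:
        grouped[group].append(secs)
    return {group: mean(values) for group, values in grouped.items()}


# One mean across everything, pulled upwards by the largest sites.
overall = mean(secs for _, secs in timings)
print(f"overall mean: {overall:.1f}s")

# Per-group means, which show each group's typical experience.
for group, avg in mean_by_group(timings).items():
    print(f"{group}: {avg:.1f}s")
```

In this made-up data the overall mean is 14.0 seconds, which describes none of the groups well: the smaller sites average 3.0 seconds and the largest 40.0.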

[6] For the handful of the very largest customers, the data shows what we’d expect, which is that requests, especially delivering exports, take quite a bit longer, as this reflects the huge amount of data on their platform. These customers typically get tens of thousands of responses to consultations, run hundreds of exercises a year, and have huge peaks in traffic and hundreds of administrators (devolved governments, very large national bodies, certain central/federal government departments). By contrast, small to medium sized organisations on smaller demand sites, which make up the majority of our customer base, have quicker times for those exports as the data being served is smaller. These customers tend to have regional or interest-based audience groups (the customers may be local councils, specialist regulatory bodies, etc.), lower staff numbers, and are less likely to run consultations of national interest. In the middle, we have around fifty medium to large organisations who tend to be other central/federal government departments, large local government entities, and larger regulatory / health / infrastructure bodies, again with quite a bit of data to manage with most requests.